Most designers are trained to improve screens.

I was trained by experience to interrogate systems.

There is a difference.

In one organization, I was initially asked to “clean up” a surface interaction. The interface felt dated and fragmented. The instinct was to redesign.

But within weeks, it became clear the UI wasn’t the issue.

The backend had over twenty loosely defined execution states. Internal teams had memorized compensatory workflows. DevOps relied on tribal knowledge to interpret logs. Customer teams were operating from a different mental model than engineering was.

The interface was not broken.

The abstraction was.

We were projecting coherence at the surface while the underlying state architecture was unstable.

So we didn’t redesign a screen.

We re-architected the state model.

We rationalized execution statuses into a defined lifecycle. We exposed hidden transitions. We mapped role-based dependencies. We translated backend logic into a legible system of record.
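As a rough illustration of what "a defined lifecycle" with exposed transitions can look like, here is a minimal Python sketch. Everything named in it is an assumption made for illustration: the stage names, the legacy status strings, and the helpers `normalize` and `transition` are hypothetical, not the actual model from that project.

```python
from enum import Enum


class Stage(Enum):
    """Hypothetical lifecycle stages; real names would come from the domain."""
    QUEUED = "queued"
    RUNNING = "running"
    BLOCKED = "blocked"
    SUCCEEDED = "succeeded"
    FAILED = "failed"


# Every legacy status maps to exactly one stage (invented examples).
LEGACY_TO_STAGE = {
    "pending": Stage.QUEUED,
    "waiting_for_worker": Stage.QUEUED,
    "in_progress": Stage.RUNNING,
    "retrying": Stage.RUNNING,
    "on_hold": Stage.BLOCKED,
    "done": Stage.SUCCEEDED,
    "error": Stage.FAILED,
    "timed_out": Stage.FAILED,
}

# Transitions are declared, so nothing moves between stages implicitly.
ALLOWED = {
    Stage.QUEUED: {Stage.RUNNING},
    Stage.RUNNING: {Stage.BLOCKED, Stage.SUCCEEDED, Stage.FAILED},
    Stage.BLOCKED: {Stage.RUNNING, Stage.FAILED},
    Stage.SUCCEEDED: set(),
    Stage.FAILED: {Stage.QUEUED},  # explicit retry path
}


def normalize(legacy_status: str) -> Stage:
    """Translate a legacy status, failing loudly on anything unmapped."""
    try:
        return LEGACY_TO_STAGE[legacy_status]
    except KeyError:
        raise ValueError(f"unmapped legacy status: {legacy_status!r}") from None


def transition(current: Stage, target: Stage) -> Stage:
    """Return the new stage, or raise if the move is not declared."""
    if target not in ALLOWED[current]:
        raise ValueError(f"undeclared transition: {current.value} -> {target.value}")
    return target
```

The point of the sketch is that an unmapped status or an undeclared move raises an error instead of being absorbed by a memorized workaround.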

Only then did the interface become coherent.

That moment permanently reframed how I see product design.

The interface is not the product.

The abstraction is.

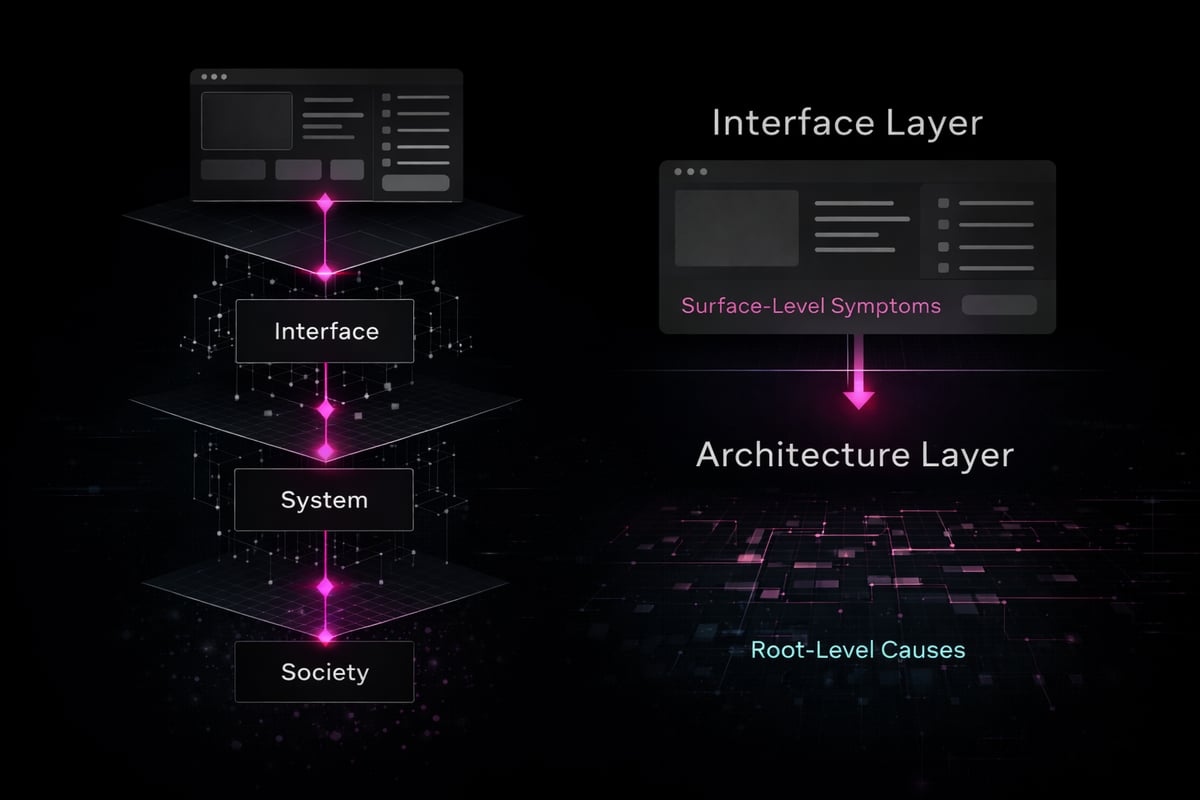

The Interface Is Often a Symptom

In complex systems, surface friction is rarely a UI failure. It is evidence of deeper structural incoherence.

A confusing UI often reflects:

- Undefined or overloaded states

- Mismatched role permissions

- Invisible system dependencies

- Incentives encoded in metrics rather than architecture

- Governance handled socially instead of structurally

- Workarounds normalized instead of resolved

Teams feel this friction as “UX debt.”

But what they are experiencing is abstraction debt.

When models drift from operational reality, designers compensate visually. When states are ambiguous, interfaces become verbose. When governance is unclear, permissions become reactive.

You can polish the interface indefinitely and preserve fragility.

Design that stops at aesthetics accelerates dysfunction.

Design that interrogates abstraction changes the system.

What Systems Thinking Actually Requires

Systems thinking is not diagramming.

It is deciding which layer you are accountable for.

At the interface layer, you ask:

- Is this usable?

- Is this clear?

- Is this efficient?

- Does the interaction reduce cognitive load?

At the systems layer, you ask:

- Is this model accurate?

- Are these states real?

- Who owns this transition?

- What happens when this fails?

- What behavior is this normalizing?

- Where does responsibility shift across roles?

At the infrastructure layer, you ask:

- What kind of organization does this architecture create?

- What power dynamics does this encode?

- What labor is hidden?

- What becomes invisible at scale?

- What political decisions are being disguised as technical ones?

That final layer is where product design intersects with society.

Because once software becomes operational backbone, it stops being optional.

It becomes structural.

When your system defines how work flows, how approvals are granted, how visibility is surfaced, and how authority is distributed, you are not designing features.

You are designing conditions.

How I Approach System Intervention

When I enter a fragmented environment, I follow a consistent pattern.

1. Treat the initial request as a signal, not the problem. Surface asks often indicate deeper architectural strain.

2. Map the real operational flow before touching the interface. I document how work actually moves, not how it is supposed to move.

3. Surface hidden states, dependencies, and compensatory behaviors. Where are people memorizing logic? Where are they bridging gaps manually? What breaks under pressure?

4. Translate backend complexity into human-legible models. State models. Role-based IA. Execution maps. Observability layers. Abstraction becomes visible.

5. Align architecture, incentives, and governance before designing artifacts. Because if the architecture contradicts incentives, the interface will collapse under tension.
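Steps 2 and 3 can be partly mechanized. The sketch below compares a declared state model against status changes observed in practice and counts the ones the model does not account for. It is an assumption-laden illustration: the statuses, the `job-N` identifiers, the event rows, and the function `undeclared_transitions` are all invented, and real input would come from whatever logs or audit tables the system actually keeps.

```python
from collections import Counter

# Declared model: the transitions the system is supposed to allow (hypothetical).
DECLARED = {
    ("queued", "running"),
    ("running", "succeeded"),
    ("running", "failed"),
}

# Observed events as (item_id, status) in time order, e.g. parsed from logs.
# These rows are invented for illustration.
OBSERVED = [
    ("job-1", "queued"), ("job-1", "running"), ("job-1", "succeeded"),
    ("job-2", "queued"), ("job-2", "running"), ("job-2", "failed"),
    ("job-2", "running"), ("job-2", "succeeded"),
    ("job-3", "queued"), ("job-3", "succeeded"),
]


def undeclared_transitions(events, declared):
    """Count observed status changes that the declared model does not contain."""
    last_status = {}
    surprises = Counter()
    for item_id, status in events:
        previous = last_status.get(item_id)
        if previous is not None and (previous, status) not in declared:
            surprises[(previous, status)] += 1
        last_status[item_id] = status
    return surprises


gaps = undeclared_transitions(OBSERVED, DECLARED)
for (source, target), count in gaps.items():
    print(f"{source} -> {target}: seen {count} time(s), not in the declared model")
```

In this invented data, the retry from `failed` back to `running` and the jump from `queued` straight to `succeeded` are the kind of hidden transitions someone has usually memorized rather than documented.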

This work lives at the intersection of experience, abstraction, and structural coherence.

It is not visible from the outside.

But it determines whether platforms scale cleanly or collapse under fragmentation.

AI Didn’t Change the Work. It Exposed It.

AI has amplified abstraction risk.

Generative systems produce plausible outputs. They do not validate whether the architecture beneath them is coherent.

If your:

- Data model is unstable

- State definitions are ambiguous

- Observability is weak

- Governance constraints are unclear

- Role boundaries are undefined

AI will scale those flaws.

The danger is not incorrect output.

The danger is confident output built on unstable structure.

When abstraction errors exist, probabilistic systems amplify them at velocity.

Designers working with AI are now participating in probabilistic infrastructure.

That requires architectural literacy, not just interface fluency.

Speed is irrelevant if the abstraction is wrong.

Acceleration without structural clarity compounds risk.

The Work That Doesn’t Screenshot Well

The most consequential design work rarely makes it into portfolios.

It looks like:

- Rationalizing fragmented state architectures

- Collapsing twenty statuses into five coherent lifecycle stages

- Reframing UI asks into systemic exposure work

- Designing observability into execution layers

- Aligning internal and external mental models

- Encoding governance before scale forces reactive policy

- Defining permission boundaries as structural constraints, not afterthoughts

- Introducing legibility where politics once filled gaps
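To make "permission boundaries as structural constraints" concrete, here is one possible shape, sketched in Python. The roles, statuses, and the `authorize` helper are hypothetical. The idea it illustrates is that the boundary lives in a single declared table, and a refusal names who owns the transition instead of leaving that to interpretation.

```python
from dataclasses import dataclass

# Hypothetical roles and the transitions each one may perform.
# The boundary is declared in one place rather than scattered across UI checks.
PERMITTED = {
    "operator": {("queued", "running"), ("running", "blocked")},
    "approver": {("blocked", "running"), ("blocked", "failed")},
}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def authorize(role: str, current: str, target: str) -> Decision:
    """Decide whether a role may move an item, with a legible reason."""
    moves = PERMITTED.get(role)
    if moves is None:
        return Decision(False, f"unknown role: {role}")
    if (current, target) not in moves:
        owners = sorted(r for r, m in PERMITTED.items() if (current, target) in m)
        if owners:
            return Decision(False, f"{current} -> {target} is owned by: {', '.join(owners)}")
        return Decision(False, f"{current} -> {target} is not a declared transition")
    return Decision(True, "ok")


print(authorize("operator", "blocked", "running").reason)
```

An interface built on a table like this can explain a disabled action in the system's own terms, which is a large part of what legibility means in practice.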

This is the hidden layer.

It does not present as visual novelty.

It presents as coherence.

When systems are legible, organizations think more clearly. When systems are fragmented, politics fills the gaps. When states are undefined, authority becomes interpretive. When abstraction drifts, trust erodes.

That is not a design opinion.

It is operational reality.

Mentorship and Perceptual Range

Most early designers are trained in craft.

Few are trained in abstraction literacy.

Fewer still are trained to see how:

- Metrics encode incentives

- Architecture shapes authority

- Fragmentation produces compensatory labor

- System opacity erodes trust

- State ambiguity drives conflict

- Observability determines accountability

Mentorship, at its best, expands perceptual range.

It teaches designers to see beyond the artifact.

To recognize when a UI problem is an abstraction problem.

To intervene upstream.

Because once you learn to detect abstraction errors, you stop optimizing symptoms.

You intervene at the source.

And when you intervene at the source, the interface stabilizes almost effortlessly.

Product Design’s Infrastructure Era

Product design is entering its infrastructure era.

Interfaces are no longer isolated touchpoints. They are gateways into systemic environments that influence labor, capital, knowledge, and decision-making.

Software no longer supplements work.

It structures it.

We are not simply improving usability.

We are shaping conditions.

And conditions compound.

Every abstraction decision encodes future behavior. Every state model defines how power moves. Every permission boundary establishes trust or erodes it. Every governance shortcut becomes technical debt with organizational consequences.

The differentiator in this era will not be speed, polish, or tool fluency.

It will be structural fluency.

Can you:

- Diagnose systemic incoherence?

- Translate complexity into legible models?

- Protect architecture from short-term optimization pressure?

- Encode governance before scale hardens flaws?

- Recognize when metrics are distorting system intent?

- See where fragmentation is producing invisible labor?

This is not a philosophical shift.

It is a professional one.

Designers already sit inside infrastructure.

The question is whether we operate with that awareness.

Because once systems scale, they do not merely support society.

They structure it.

And structuring reality is not aesthetic work.

It is stewardship.