On 10 November 2022, the US Cybersecurity and Infrastructure Security Agency (CISA) published its methodology for vulnerability categorization.

As Executive Assistant Director (EAD) Eric Goldstein stated in his blog post “Transforming the Vulnerability Management Landscape,” implementing a methodology such as SSVC is a critical step in advancing the vulnerability management ecosystem.

OVERVIEW

The CISA Stakeholder-Specific Vulnerability Categorization (SSVC) is a customized decision tree model that assists in prioritizing vulnerability response for the United States government (USG); state, local, tribal, and territorial (SLTT) governments; and critical infrastructure (CI) entities. The goal of SSVC is to assist in prioritizing the remediation of a vulnerability based on the impact exploitation would have on the particular organization(s). The four SSVC scoring decisions, described in this post, outline how CISA communicates patching prioritization. Any individual or organization can use SSVC to enhance its own vulnerability management practices.

THE VULNERABILITY SCORING DECISION

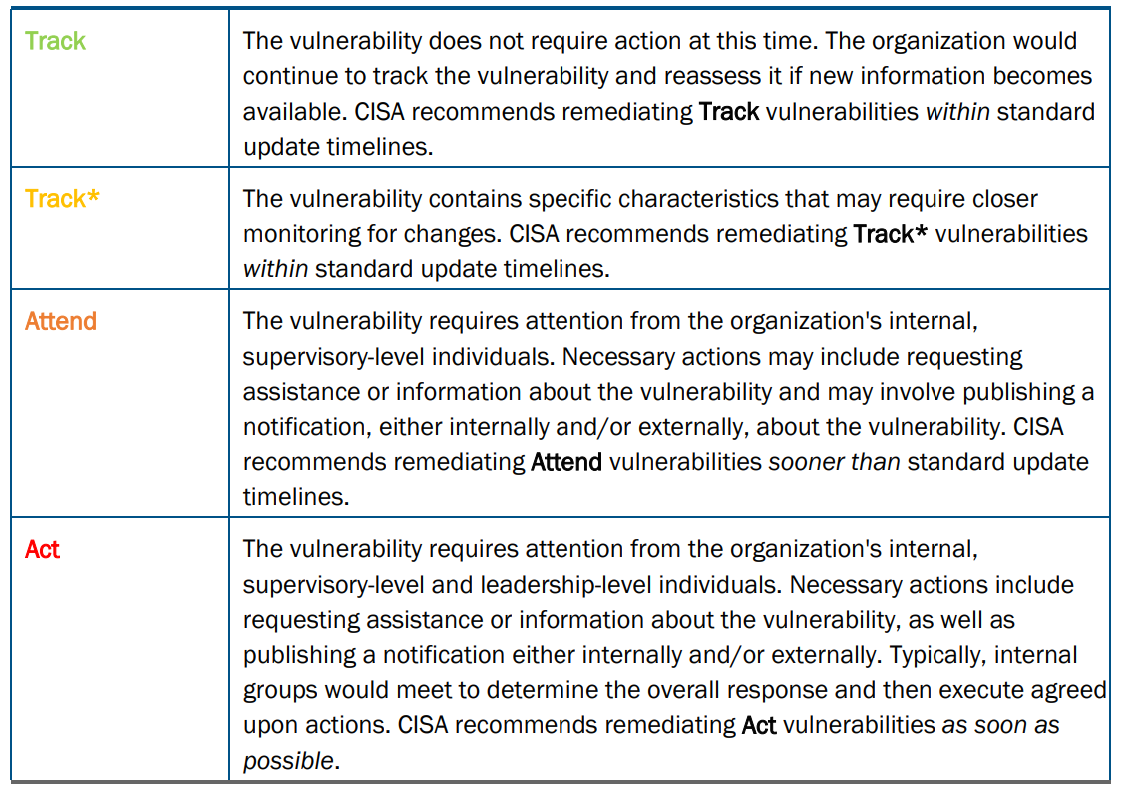

CISA uses its own SSVC decision tree model to sort relevant vulnerabilities into four possible decisions: Track, Track*, Attend, and Act.

The tree reaches one of these decisions based on five decision points, which are briefly explained as follows:

Exploitation Status

Evidence of Active Exploitation of a Vulnerability

This measure determines the present state of exploitation of the vulnerability. It does not predict future exploitation or measure feasibility or ease of adversary development of future exploit code; rather, it acknowledges available information at the time of analysis.

It has three values:

- None: There is no evidence of active exploitation and no public proof of concept (PoC) of how to exploit the vulnerability.

- Public PoC: One of the following is true: (1) a typical public PoC exists in sources such as Metasploit or websites like ExploitDB; or (2) the vulnerability has a well-known method of exploitation. Some examples of condition (2) are open-source web proxies that serve as the PoC code for how to exploit any vulnerability in the vein of improper validation of Transport Layer Security (TLS) certificates, and Wireshark serving as a PoC for packet replay attacks on Ethernet or Wi-Fi networks.

- Active: Shared, observable, and reliable evidence that cyber threat actors have used the exploit in the wild; the public reporting is from a credible source.
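Because the three values are defined in order of increasing evidence, an analyst's findings can be mapped to one of them by checking the most severe condition first. The sketch below is a minimal illustration, not CISA tooling; the `Exploitation` enum, the `classify_exploitation` helper, and its three boolean inputs are hypothetical names chosen for this example.

```python
from enum import Enum


class Exploitation(Enum):
    """The three Exploitation Status values described above."""
    NONE = "None"
    PUBLIC_POC = "Public PoC"
    ACTIVE = "Active"


def classify_exploitation(
    credible_in_the_wild_reports: bool,
    public_poc_available: bool,
    well_known_method: bool,
) -> Exploitation:
    """Hypothetical helper: map analyst findings to an Exploitation value.

    Checks run from most to least severe, so credible evidence of use in
    the wild wins even when a public PoC also exists.
    """
    if credible_in_the_wild_reports:
        return Exploitation.ACTIVE
    # Condition (1) a published PoC, or (2) a well-known exploitation method.
    if public_poc_available or well_known_method:
        return Exploitation.PUBLIC_POC
    # No evidence of active exploitation and no public PoC.
    return Exploitation.NONE


# A PoC on ExploitDB, but no credible reports of use in the wild:
print(classify_exploitation(False, True, False).value)  # Public PoC
```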

Technical Impact

Technical Impact of Exploiting the Vulnerability

The technical impact is similar to the Common Vulnerability Scoring System (CVSS) base score’s concept of “severity.”

When evaluating technical impact, the definition of scope is particularly important.

Note that applying CVSS scores blindly in the risk analysis process does not work well. The CVSS methodology should be applied in the context of each project, and patch management should then follow from the resulting criticality of the risk. Categorizing vulnerabilities this way helps organizations prioritize their countermeasures against each analyzed vulnerability.

In the SSVC methodology, technical impact takes one of two values:

- Partial: One of the following is true: The exploit gives the threat actor limited control over, or information exposure about, the behavior of the software that contains the vulnerability; or the exploit gives the threat actor a low stochastic opportunity for total control. In this context, “low” means that the attacker cannot reasonably make enough attempts to overcome obstacles, either physical or security-based, to achieve total control. A denial-of-service attack is a form of limited control over the behavior of the vulnerable component.

- Total: The exploit gives the adversary total control over the behavior of the software, or it gives total disclosure of all information on the system that contains the vulnerability.
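The two definitions reduce to a simple rule: Total if the exploit yields total control or total disclosure, Partial otherwise. A minimal sketch, using a hypothetical `classify_technical_impact` helper; it assumes the analyst has already judged whether total control is reliably achievable, so a merely low stochastic chance of total control is passed as `total_control=False`.

```python
from enum import Enum


class TechnicalImpact(Enum):
    PARTIAL = "Partial"
    TOTAL = "Total"


def classify_technical_impact(total_control: bool, total_disclosure: bool) -> TechnicalImpact:
    """Hypothetical helper following the two definitions above.

    total_control: the exploit gives total control over the software's behavior.
    total_disclosure: the exploit discloses all information on the affected system.
    Anything less (limited control or exposure, denial of service, or only a
    low stochastic chance of total control) is Partial.
    """
    if total_control or total_disclosure:
        return TechnicalImpact.TOTAL
    return TechnicalImpact.PARTIAL


# A denial-of-service bug: limited control over the component's behavior.
print(classify_technical_impact(total_control=False, total_disclosure=False).value)  # Partial
```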

Automatable

Automatable represents the ease and speed with which a cyber threat actor can cause exploitation events.

Automatable captures the answer to the question, “Can an attacker reliably automate creating exploitation events for this vulnerability?” Several factors influence whether an actor can rapidly cause many exploitation events. These include attack complexity, the specific code an actor would need to write or configure themselves, and the usual network deployment of the vulnerable system.

As the question suggests, this decision point accepts only two values:

- No: Steps 1-4 of the kill chain (reconnaissance, weaponization, delivery, and exploitation) cannot be reliably automated for this vulnerability. Examples of why each step may not be reliably automatable include: (1) the vulnerable component is not searchable or enumerable on the network, (2) weaponization may require human direction for each target, (3) delivery may require channels that widely deployed network security configurations block, and (4) exploitation may be frustrated by adequate exploit-prevention techniques enabled by default (address space layout randomization [ASLR] is an example of an exploit-prevention tool).

- Yes: Steps 1-4 of the kill chain can be reliably automated. If the vulnerability allows unauthenticated remote code execution (RCE) or command injection, the answer is likely Yes.

Another way of thinking about Automatable is to determine what barriers are in place that prevent the vulnerability from being wormable. A single effective barrier is enough for a No answer: for example, the attacker must be authenticated and logged in, or the vulnerable system has no connection to the internet.
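The rule “all four kill-chain steps reliably automatable, otherwise No” can be expressed as a check over one flag per step. In this sketch the `KillChainAssessment` record and the `automatable` function are hypothetical; how each flag gets set remains an analyst judgment based on the barriers listed above.

```python
from dataclasses import dataclass


@dataclass
class KillChainAssessment:
    """Hypothetical record: can each of kill-chain steps 1-4 be reliably automated?"""
    reconnaissance: bool  # False if the component is not searchable or enumerable
    weaponization: bool   # False if each target needs human direction
    delivery: bool        # False if widely deployed network security configs block the channel
    exploitation: bool    # False if default exploit prevention (such as ASLR) frustrates it


def automatable(a: KillChainAssessment) -> str:
    """Return "Yes" only if all four steps can be reliably automated.

    A single effective barrier at any step is enough for "No".
    """
    steps = (a.reconnaissance, a.weaponization, a.delivery, a.exploitation)
    return "Yes" if all(steps) else "No"


# Unauthenticated RCE on an internet-facing service: no barrier at any step.
print(automatable(KillChainAssessment(True, True, True, True)))   # Yes
# Same bug on a system with no internet connection (assumed here to block
# remote reconnaissance).
print(automatable(KillChainAssessment(False, True, True, True)))  # No
```

Recording each step separately keeps the reasoning auditable: a No answer points to the specific barrier that stopped automation.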

Mission Prevalence

Impact on Mission Essential Functions of Relevant Entities

A mission essential function (MEF) is a function directly related to accomplishing the organization’s mission as outlined in its statutory or executive charter.

- Minimal: Neither Support nor Essential applies. The vulnerable component may be used within the entities, but it is not used as a mission-essential component, nor does it provide impactful support to mission-essential functions.

- Support: The vulnerable component only supports MEFs for two or more entities.

- Essential: The vulnerable component directly provides capabilities that constitute at least one MEF for at least one entity; component failure may (but does not necessarily) lead to overall mission failure.
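As with Exploitation, the Mission Prevalence values can be checked from most to least severe. A minimal sketch with hypothetical names (`MissionPrevalence`, `classify_mission_prevalence`); it assumes the caller has already decided, per the definitions above, whether the component directly provides an MEF or only supports MEFs.

```python
from enum import Enum


class MissionPrevalence(Enum):
    MINIMAL = "Minimal"
    SUPPORT = "Support"
    ESSENTIAL = "Essential"


def classify_mission_prevalence(
    directly_provides_mef: bool,
    supports_mefs: bool,
) -> MissionPrevalence:
    """Hypothetical helper: check the most severe definition first.

    directly_provides_mef: the component directly provides capabilities that
        constitute at least one MEF for at least one entity.
    supports_mefs: the component only supports MEFs, as described under
        Support above (the entity-count condition is judged by the caller).
    """
    if directly_provides_mef:
        return MissionPrevalence.ESSENTIAL
    if supports_mefs:
        return MissionPrevalence.SUPPORT
    # Neither Support nor Essential applies.
    return MissionPrevalence.MINIMAL
```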

Public Well-being Impact

Impacts of Affected System Compromise on Humans

Safety violations are those that negatively impact well-being. SSVC adopts the expansive definition of well-being used by the Centers for Disease Control and Prevention (CDC), one that comprises physical, social, emotional, and psychological health.

For more detailed information about SSVC, see the CISA SSVC guide: https://www.cisa.gov/sites/default/files/publications/cisa-ssvc-guide%20508c.pdf